Artificial intelligence, hardware innovations boost confocal microscope’s performance

Enhancing the workhorse


Since artificial intelligence pioneer Marvin Minsky patented the principle of confocal microscopy in 1957, it has become the workhorse standard in life science laboratories worldwide, thanks to its superior contrast over traditional wide-field microscopy. Yet confocal microscopes aren’t perfect. They boost resolution by imaging just one in-focus point at a time, so scanning an entire delicate biological sample can take quite a while and expose it to potentially toxic doses of light.

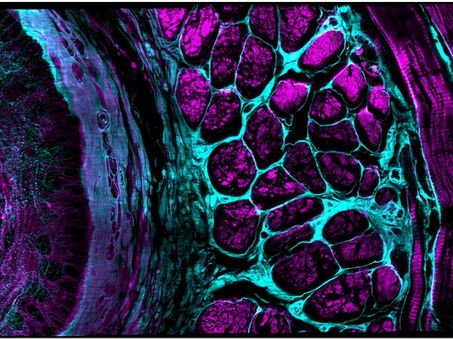

Mouse esophageal tissue slab (XY image), immunostained for tubulin (cyan) and actin (magenta), imaged in triple-view SIM mode.

Yicong Wu, Xiaofei Han et al., Nature, 2021

To push confocal imaging to an unprecedented level of performance, a collaboration at the Marine Biological Laboratory (MBL) has invented a “kitchen sink” confocal platform that borrows solutions from other high-powered imaging systems, adds a unifying thread of “Deep Learning” artificial intelligence algorithms, and successfully improves the confocal’s volumetric resolution by more than 10-fold while simultaneously reducing phototoxicity. Their report on the technology is published online in Nature.

“Many labs have confocals, and if they can eke more performance out of them using these artificial intelligence algorithms, then they don’t have to invest in a whole new microscope. To me, that’s one of the best and most exciting reasons to adopt these AI methods,” said senior author and MBL Fellow Hari Shroff of the National Institute of Biomedical Imaging and Bioengineering.

Among its innovations, the new confocal platform uses three objective lenses, allowing one to image a wide variety of sample sizes, from nuclei and neurons in the C. elegans embryo to the whole adult worm. Multiple specimen views are rapidly captured, registered and fused to yield reconstructions with improved resolution over single-view confocal microscopy. The platform also introduces innovative scan heads for the three lenses, allowing line-scanning illumination to be easily added to the microscope base.
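To see why line-scanning illumination can speed up acquisition, here is a back-of-the-envelope sketch in Python. It assumes each pixel needs the same exposure time in both modes and ignores scanner flyback and detector overheads; the function name and the numbers are illustrative, not taken from the team’s instrument.

```python
def frame_time_s(nx: int, ny: int, dwell_us: float, mode: str = "point") -> float:
    """Rough time to acquire one nx-by-ny frame, in seconds.

    Assumes every pixel receives the same exposure (dwell_us) in both modes
    and ignores scanner flyback, readout and other overheads.
    """
    if mode == "point":
        # Point scanning: one focused spot visits every pixel in turn.
        exposures = nx * ny
    elif mode == "line":
        # Line scanning: a whole row of nx pixels is illuminated and
        # recorded at once, so only ny sequential exposures are needed.
        exposures = ny
    else:
        raise ValueError(f"unknown mode: {mode!r}")
    return exposures * dwell_us * 1e-6


if __name__ == "__main__":
    for mode in ("point", "line"):
        t = frame_time_s(1024, 1024, dwell_us=1.0, mode=mode)
        print(f"{mode:>5}: {t * 1e3:.3f} ms per 1024 x 1024 frame")
```

Under these assumptions the speedup is simply the number of pixels per line (1,024 here). In practice, detector readout and available laser power set further limits.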

Moreover, the team added “super-resolution” capacity to the platform (enhanced resolution beyond the diffraction limit of light) by adapting techniques from structured illumination microscopy.
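The core trick of structured illumination can be illustrated with a toy one-dimensional calculation. When striped illumination falls on fine sample detail, the emitted light contains a coarser moiré pattern that fits through the microscope’s limited frequency passband. This is a generic sketch of that frequency-mixing principle, not the team’s specific scheme; the grid size, cutoff and frequencies are assumed purely for illustration.

```python
import cmath
import math


def dft_magnitudes(x):
    """Magnitudes of the discrete Fourier transform (plain O(n^2) version)."""
    n = len(x)
    return [
        abs(sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n)
    ]


def frequencies_in_passband(signal, cutoff, tol=1e-6):
    """Nonzero frequency bins k <= cutoff that carry measurable signal."""
    mags = dft_magnitudes(signal)
    return [k for k in range(1, cutoff + 1) if mags[k] > tol * len(signal)]


N = 64           # samples across the field of view (assumed)
CUTOFF = 10      # hypothetical detection cutoff, in DFT bins
K_OBJ = 16       # fine object detail, beyond the cutoff
K_ILL = 10       # striped illumination frequency, at the cutoff

obj = [1 + math.cos(2 * math.pi * K_OBJ * t / N) for t in range(N)]
stripes = [1 + math.cos(2 * math.pi * K_ILL * t / N) for t in range(N)]
# Fluorescence emission is the dye density times the local illumination.
emission = [o * s for o, s in zip(obj, stripes)]

print(frequencies_in_passband(obj, CUTOFF))       # -> []
print(frequencies_in_passband(emission, CUTOFF))  # -> [6, 10]
```

Under uniform light the detail at bin 16 never reaches the detector. With stripes, bin 6 appears: the same detail shifted down by the stripe frequency. A full SIM reconstruction records several stripe phases (and, in 2D, orientations), separates these components and shifts them back, extending the recoverable band from 10 to as much as 10 + 10 = 20. This is why linear SIM improves resolution by up to about twofold.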

“The hardware summit that gets climbed in this platform is the multiple lenses around the sample, and then the super-resolution trick, which takes a combination of hardware and computation to achieve. It’s a tour de force, but it’s a pretty phototoxic recipe. There’s a lot of light being delivered to the sample,” said co-author and MBL Fellow Patrick La Rivière of the University of Chicago.

One way to address phototoxicity is to lower the intensity of the microscope’s laser. But then “noise” becomes a problem: background graininess that can obscure fine details of the object you want to image (the “signal”). This is where artificial intelligence comes in.
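The trade-off between light dose and noise follows from photon statistics. Here is a minimal sketch, assuming photon shot noise is the only noise source (real detectors also add read noise and background). Photon counts are Poisson distributed, so the SNR grows only as the square root of the number of detected photons, and cutting the light fourfold halves the SNR. The short simulation checks the formula with a simple Poisson sampler; the photon counts are illustrative.

```python
import math
import random


def shot_noise_snr(mean_photons: float) -> float:
    """Ideal SNR of a pixel limited only by photon shot noise.

    Photon counts follow a Poisson distribution, whose variance equals its
    mean N, so SNR = N / sqrt(N) = sqrt(N).
    """
    if mean_photons <= 0:
        raise ValueError("mean_photons must be positive")
    return math.sqrt(mean_photons)


def poisson_sample(lam: float, rng: random.Random) -> int:
    """Knuth's Poisson sampler; fine for small lam (underflows for lam > ~700)."""
    limit = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= limit:
            return k
        k += 1


def empirical_snr(lam: float, n: int = 10_000, seed: int = 0) -> float:
    """Estimate mean / standard deviation from simulated photon counts."""
    rng = random.Random(seed)
    counts = [poisson_sample(lam, rng) for _ in range(n)]
    mean = sum(counts) / n
    std = math.sqrt(sum((c - mean) ** 2 for c in counts) / (n - 1))
    return mean / std


# Cutting the light dose fourfold, then fourfold again.
for photons in (100, 25, 4):
    print(photons, shot_noise_snr(photons), round(empirical_snr(photons), 2))
```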

The team trained a Deep Learning computer model, or neural network, to relate poorer-quality images with a low signal-to-noise ratio (SNR) to better images with a higher SNR. “Eventually the network could predict the higher SNR images, even given a fairly low SNR input,” Shroff said.
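The training idea can be sketched in miniature. This is a deliberately tiny stand-in, not the team’s network. A 5-tap linear filter (instead of a deep neural network) learns, by gradient descent on mean squared error, to map simulated low-SNR signals to matching high-SNR versions of the same scene. The sine-wave “scenes”, noise levels, filter width and training settings are all illustrative assumptions.

```python
import math
import random

TAPS = 5          # hypothetical filter width (stand-in for a network)
HALF = TAPS // 2


def make_pair(rng, n=64, low_sigma=0.3, high_sigma=0.05):
    """Simulate one scene imaged twice: a low-SNR and a high-SNR version."""
    phase = rng.uniform(0, 2 * math.pi)
    clean = [math.sin(2 * math.pi * t / 32 + phase) for t in range(n)]
    low = [c + rng.gauss(0, low_sigma) for c in clean]
    high = [c + rng.gauss(0, high_sigma) for c in clean]
    return low, high, clean


def predict(w, b, x, t):
    """Filter output at position t, using neighbours t-HALF .. t+HALF."""
    return b + sum(w[j] * x[t + j - HALF] for j in range(TAPS))


def train(pairs, epochs=200, lr=0.2):
    """Fit w, b by full-batch gradient descent on mean squared error."""
    w = [0.0] * TAPS
    w[HALF] = 1.0                      # start as a pass-through filter
    b = 0.0
    samples = [(x, t, y[t]) for x, y in pairs for t in range(HALF, len(x) - HALF)]
    m = len(samples)
    for _ in range(epochs):
        grad_w = [0.0] * TAPS
        grad_b = 0.0
        for x, t, target in samples:
            err = predict(w, b, x, t) - target
            for j in range(TAPS):
                grad_w[j] += 2 * err * x[t + j - HALF]
            grad_b += 2 * err
        w = [wj - lr * g / m for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / m
    return w, b


def mse_vs_clean(w, b, x, clean):
    """Error of the filtered low-SNR signal against the noise-free truth."""
    ts = range(HALF, len(x) - HALF)
    return sum((predict(w, b, x, t) - clean[t]) ** 2 for t in ts) / len(ts)


rng = random.Random(0)
train_pairs = [make_pair(rng)[:2] for _ in range(20)]   # (low, high) only
w, b = train(train_pairs)

passthrough = [1.0 if j == HALF else 0.0 for j in range(TAPS)]
tests = [make_pair(rng) for _ in range(5)]
before = sum(mse_vs_clean(passthrough, 0.0, low, clean) for low, _, clean in tests) / 5
after = sum(mse_vs_clean(w, b, low, clean) for low, _, clean in tests) / 5
print(f"MSE before: {before:.4f}  after: {after:.4f}")
```

Swapping the linear filter for a convolutional neural network, and the sine waves for real image pairs, gives the general shape of supervised denoising. The paper’s actual architecture and training data are not reproduced here.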

“Deep Learning allows you to take this hardware summit as the gold standard for resolution and then train a neural network to achieve similar results with much lower SNR data, many fewer acquisitions, and so much less light dose to the sample,” La Rivière said.

The team demonstrated the platform’s capabilities on more than 20 different fixed and live samples, targeting structures that ranged from less than 100 nanometers to a millimeter in size. These included protein distributions in single cells; nuclei and developing neurons in C. elegans embryos, larvae and adults; myoblasts in Drosophila wing imaginal disks, and mouse renal, esophageal, cardiac, and brain tissues. They also see potential applications for imaging human tissue in histology and pathology labs.

Shroff, La Rivière and co-author and cell biologist Daniel Colón-Ramos of Yale School of Medicine have been collaborating at MBL for nearly a decade to develop imaging technologies that offer higher speed, higher resolution and longer imaging duration. Collaborators on this confocal platform also included Applied Scientific Instrumentation, a company they worked with both at MBL and at the National Institutes of Health.

Yicong Wu, first author on the paper, built the new confocal platform and deployed its Deep Learning approaches. Wu learned how to use Deep Learning at the MBL in the pilot version of a new course launched this year, DL@MBL: Deep Learning for Microscopy Image Analysis. (La Rivière is a faculty member in the course.)

“It’s a testament to the course that Yicong could learn Deep Learning methods in 4 days and quickly innovate with them, so we can now apply them in our lab,” Shroff said. “That’s a short feedback scheme, right? It was great that MBL catalyzed it.”


